Disruption

- Update (13:04): We are currently investigating an incident affecting core routers at MA3.

- Update (13:37): The issue has been identified and fixed, and we are restoring connectivity on individual customer stacks.

- Update (14:39): The majority of affected customer stacks are online, and we are working through the remaining affected stacks.

- Update (16:25): All services are now fully restored. Customers are advised to raise an emergency ticket if they are still experiencing issues.

Post-Mortem

Our report from the incident is as follows.

Issue

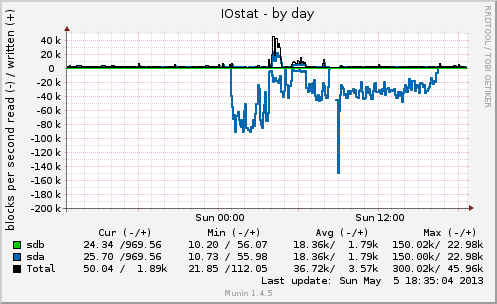

Loss of connectivity, high load and periods of unavailability for the entire MA3 facility.

Outage Length

The outage lasted between 55 and 95 minutes.

Underlying cause

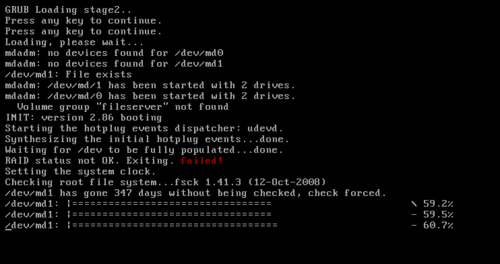

A surge in CPU load on both core routers disrupted control plane traffic, while forwarding and routing continued to operate without issue.

Customer stacks feature two network interfaces, attached to two diverse switching and routing networks; the active interface is selected by performing a reachability check (using ARP) against its respective gateway. As the core routers were not responding to control plane traffic, ARP requests were being dropped, which resulted in stacks taking both primary and secondary interfaces offline and ultimately severing all connectivity.

HA stacks were further affected by this issue: the shut-down interfaces left the members of each cluster unable to reach each other, which led to a "split brain" scenario. Even once connectivity was restored, manual intervention was required to resolve the split brain.
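As an illustration of the selection logic described above, the following is a minimal Python sketch, not the production implementation. The `Interface` type, the gateway labels `gw-a` and `gw-b`, and the `arp_ok` callback are hypothetical names introduced for this example; a real check would send ARP requests rather than call a function.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Interface:
    name: str     # hypothetical interface label
    gateway: str  # gateway this interface probes with ARP (hypothetical label)


def select_active(interfaces: List[Interface],
                  arp_ok: Callable[[str], bool]) -> Optional[Interface]:
    """Return the first interface whose gateway answers ARP, or None if none do."""
    for iface in interfaces:
        if arp_ok(iface.gateway):
            return iface
    return None


interfaces = [Interface("primary", "gw-a"), Interface("secondary", "gw-b")]

# Normal operation: both gateways answer, so the primary interface is used.
healthy = select_active(interfaces, lambda gw: True)

# One gateway stops answering: the stack fails over to the secondary interface.
failover = select_active(interfaces, lambda gw: gw == "gw-b")

# The incident: neither gateway answers ARP, so no interface is considered
# usable, even though forwarding itself was unaffected.
outage = select_active(interfaces, lambda gw: False)
```

The last case shows why a control plane problem alone was enough to take stacks offline: the check measured ARP responsiveness, not the ability to forward traffic.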
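The split brain can be sketched as follows. This is a simplified model under stated assumptions, not a description of the actual cluster software: it assumes a two-member cluster in which a standby promotes itself when it cannot reach its peer, and in which nothing demotes a member automatically once connectivity returns. The node names and function names are hypothetical.

```python
from typing import Dict


def next_roles(roles: Dict[str, str], can_reach_peer: bool) -> Dict[str, str]:
    """Apply the assumed promotion rule: an isolated standby becomes active."""
    updated = {}
    for node, role in roles.items():
        if role == "standby" and not can_reach_peer:
            updated[node] = "active"
        else:
            # Assumption: an active member is never demoted automatically.
            updated[node] = role
    return updated


def is_split_brain(roles: Dict[str, str]) -> bool:
    """More than one active member means the cluster has split."""
    return sum(1 for role in roles.values() if role == "active") > 1


before = {"node-a": "active", "node-b": "standby"}

# Interfaces shut down: the members cannot reach each other.
during = next_roles(before, can_reach_peer=False)

# Connectivity restored: both members still believe they are active.
after = next_roles(during, can_reach_peer=True)
```

In this model `is_split_brain(after)` is still true once the network is healthy again, which matches the need for manual intervention described above.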

Symptoms

Our facilities monitoring and service monitoring probes immediately reported the incident. Customers would have experienced anything from slow page load times through to a completely inaccessible site.

Resolution

A malfunctioning aggregation switch appeared to be the source of the increased CPU load throughout the network; rebooting the affected switch was sufficient to allow CPU loads to drop and for stacks to "bring up" their network interfaces after a successful ARP check.

Prevention

We have identified several areas in which improvements can be made:

- If all interfaces fail their ARP checks, interface monitoring now falls back to "MII" monitoring (Layer 1, rather than Layer 3). Should this incident happen again, all stacks will restore their connectivity within 60 seconds, greatly reducing the impact and subsequent downtime.

This fix has already been deployed.

- Only a single ARP target was previously set (the redundant gateway), which led to all ARP traffic being sent to a single core router. This has been expanded to include the second core router and, where applicable, other HA cluster members. This should greatly reduce the likelihood of HA failover and a subsequent split brain.

This fix has already been deployed.
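The two changes above can be sketched together in Python. This is a hedged illustration rather than the deployed configuration: the function name, the interface and target labels, and the rule that an interface passes when any one of its ARP targets answers are assumptions made for the example.

```python
from typing import Callable, Dict, List, Tuple


def usable_interfaces(arp_targets: Dict[str, List[str]],
                      link_up: Dict[str, bool],
                      arp_ok: Callable[[str], bool]) -> Tuple[List[str], str]:
    """Return the usable interfaces and the monitoring mode that chose them."""
    # Assumption: an interface passes if ANY of its ARP targets answers.
    by_arp = [name for name, targets in arp_targets.items()
              if any(arp_ok(target) for target in targets)]
    if by_arp:
        return by_arp, "arp"
    # Every interface failed its ARP check: fall back to link state (MII)
    # instead of shutting all interfaces down.
    by_link = [name for name in arp_targets if link_up.get(name, False)]
    return by_link, "mii"


targets = {
    "primary": ["core-router-1", "core-router-2", "ha-peer"],
    "secondary": ["core-router-1", "core-router-2", "ha-peer"],
}
links = {"primary": True, "secondary": True}

# Only one core router ignores ARP: the extra targets keep both interfaces up.
one_router_busy = usable_interfaces(targets, links, lambda t: t != "core-router-1")

# No target answers ARP at all: the link-state fallback keeps the stack online.
control_plane_down = usable_interfaces(targets, links, lambda t: False)
```

With a single ARP target and no fallback, the second scenario is the one that took stacks offline during this incident.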

In addition to the above, deploying newer-generation aggregation switches and core routers will go a long way towards addressing control plane capacity. Substantial investment in extremely high-capacity, latest-generation hardware has already been made, and the timeline for replacing existing hardware will be brought forward.
